Originally published on the InterWorks blog.

I’ll admit something upfront: I’m uncomfortable with the term “data science” — and it’s in my job title.

Five years ago, Harvard Business Review called it the “sexiest job of the 21st century.” That label sparked enormous investment in statistics, machine learning, and analytical talent across nearly every industry. Companies hired. Universities launched programs. Salaries soared.

And then things started to crack.

The Sexiest Job in Data

The hype created real problems. Anyone with a pulse and a statistics class on their transcript can label themselves a data scientist, which has diluted the field’s credibility. Companies rebrand traditional reporting as “data science” to participate in the trend. Meanwhile, organizations that made significant investments are now questioning their ROI, and for good reason.

Some organizations have achieved impressive results. But the field has accumulated a lot of noise, and separating signal from noise is harder than it should be. This post is about the failure side of that equation: specifically, why data science projects fail. Part Two will address what actually works.

Why Do Data Science Projects Fail?

After years leading data science engagements, I’ve seen the same root causes appear over and over. Here are the five that matter most.

1. Management

Data science requires a fundamentally different management framework than traditional reporting or software development. In conventional projects, success can be defined, scoped, and guaranteed. In data science, you are doing research. Outcomes are probabilistic, not certain.

When organizations measure data science like they measure development sprints — fixed scope, fixed timeline, fixed deliverable — they set themselves up to fail. The work doesn’t fit that box.

2. Data

Three distinct data problems kill projects:

- Poor quality: Garbage in, garbage out. This is as true in data science as it ever was in traditional analytics.

- Limited availability: Data exists somewhere in the organization, but getting access to it is impossible, politically or technically.

- Insufficient performance: Slow data environments create bottlenecks that make exploratory analysis impractical.

3. Tools

Outdated infrastructure constrains what data scientists can actually accomplish. Modern statistical and machine learning platforms require compute, flexibility, and access to current libraries. Organizations that restrict their data scientists to legacy tools are asking them to work with one hand tied behind their backs.

4. Organizational Attitudes

This is the hardest one. Leadership often prefers intuition over data-driven decisions — especially when the data contradicts their experience or instincts. Building genuine trust in algorithmic recommendations requires significant cultural work over time.

There’s also a subtler version of this problem: organizations treat data science as an isolated IT function rather than as a business function embedded in decision-making. That separation is corrosive.

5. Data Scientists’ Responsibility

The profession itself bears some responsibility here. Practitioners have a tendency to over-complicate. We pursue elegant or technically impressive solutions when a simple approach would solve 80% of the problem in a fraction of the time. We build models that business stakeholders don’t understand and can’t use.

Analysis done for the sake of analysis produces nothing. Data scientists need to stay relentlessly focused on communicating value in terms that matter to the business.

Increasing Your Return on Investment

Three recommendations that apply broadly:

Set realistic expectations. Before embarking on any data science initiative, honestly assess your organization’s data foundation. What do you actually have? What’s accessible? What’s clean? The answer to those questions determines what’s possible — and you need to know that before making promises.
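To make the "what's clean?" question concrete, here is a minimal sketch of the kind of first-pass check I have in mind. It is only an illustration under assumptions of my own: the function name `profile_rows`, the sample records, and the field names are all hypothetical, and a real assessment would cover far more than missing values and exact duplicates.

```python
from collections import Counter


def profile_rows(rows, required_fields):
    """Summarize two basic data-quality signals for a list of dict records.

    Hypothetical helper for illustration: reports the share of missing
    values per field and the number of exact duplicate records.
    """
    total = len(rows)

    # Treat None and blank strings as missing.
    missing = Counter()
    for row in rows:
        for field in required_fields:
            value = row.get(field)
            if value is None or str(value).strip() == "":
                missing[field] += 1

    # A record is a duplicate if all required fields match an earlier record.
    keys = Counter(tuple(row.get(f) for f in required_fields) for row in rows)
    duplicates = sum(count - 1 for count in keys.values() if count > 1)

    return {
        "rows": total,
        "missing_rate": {
            f: (missing[f] / total if total else 0.0) for f in required_fields
        },
        "duplicate_rows": duplicates,
    }


# Made-up sample data, not drawn from any real engagement.
sample = [
    {"customer_id": 1, "region": "West", "revenue": 120.0},
    {"customer_id": 2, "region": "", "revenue": 80.0},
    {"customer_id": 2, "region": "", "revenue": 80.0},
    {"customer_id": 3, "region": "East", "revenue": None},
]

report = profile_rows(sample, ["customer_id", "region", "revenue"])
print(report)
```

Even a rough report like this, run against the tables a project would depend on, gives you something factual to point to when deciding what is realistic to promise.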

Hire carefully. The field is full of people who can perform the tasks of data science but cannot connect their work to business value. Vetting for that connection — asking for examples, running trial projects — saves enormous pain downstream. Internal staff may also need significant training to reach the level where they can contribute meaningfully.

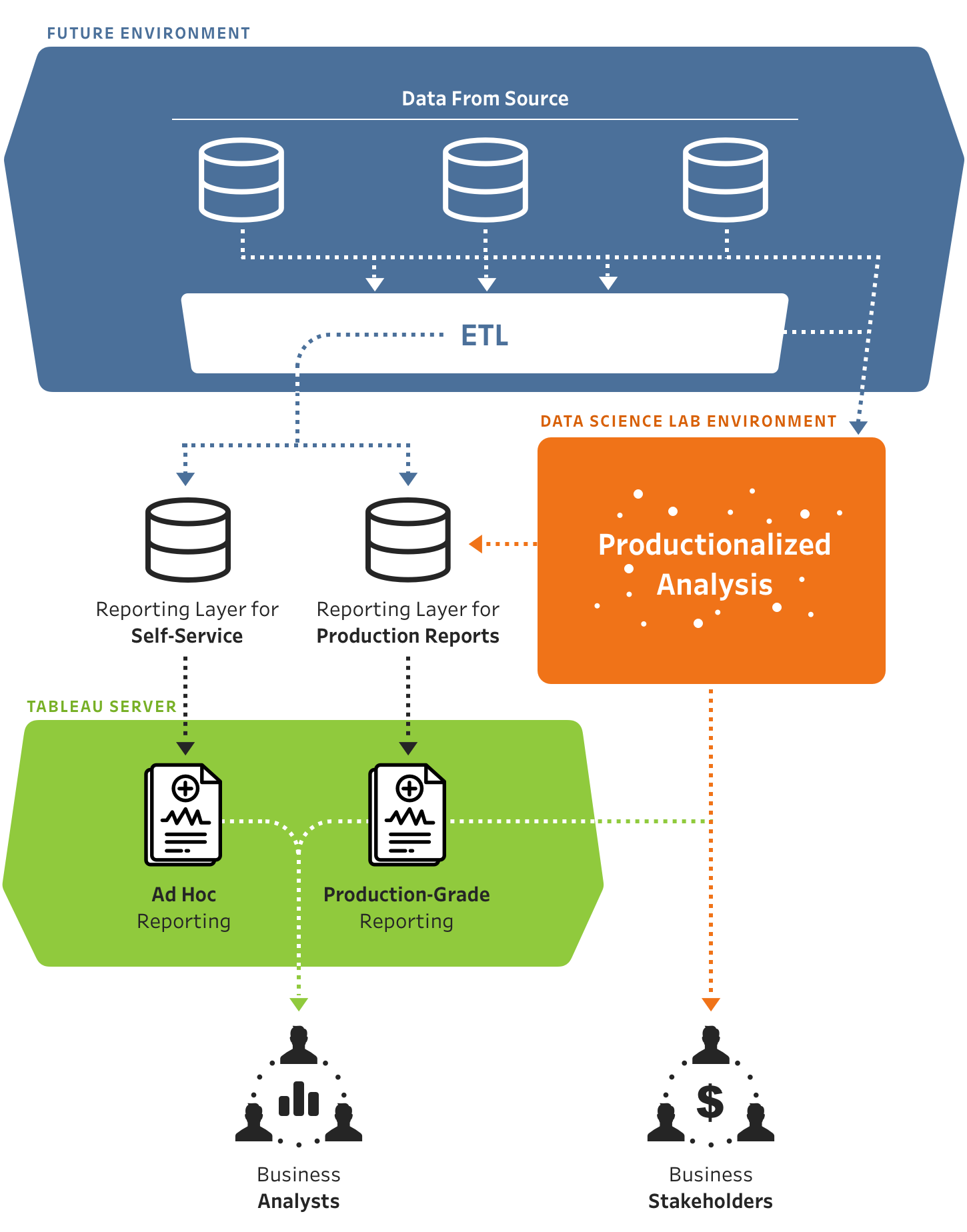

Build new engagement models. Stop treating data science as a service desk that receives requests and returns outputs. The best engagements I’ve seen treat analytical work as a collaborative function where business stakeholders and data scientists work together from problem definition through solution deployment. That requires establishing new protocols and new norms — but the results justify the investment.

Part Two of this series focuses on the positive: how to build data science organizations that actually succeed.